Table of Contents

What is Generative AI?

Academic Integrity & Misuse

Misinformation & Inaccuracy

Social Impacts

Privacy & Data Security

Copyright & Intellectual Property

Accessibility & Equity Considerations

Institutional Policies

Additional Resources

Note: This article includes information generated with assistance from Microsoft Copilot.

What is Generative AI?

Introduction

Generative artificial intelligence (AI) tools—such as ChatGPT, Copilot, Gemini, Claude, and DALL·E—are transforming the way we learn, teach, and create. These tools can generate text, images, code, and more. Used well, AI can support creativity, learning, and idea development. Used poorly, AI can have serious and negative consequences.

Approved Tools

WSU, in partnership with Minnesota State, has approved Microsoft Copilot as a safe AI tool. Our license with Microsoft ensures that data will not be used to train models or be shared outside of our instance. Signing in with your StarID ensures that your interactions with Copilot are protected within the university's secure Microsoft 365 environment.

Copilot is free for students and employees when you sign in with your StarID.

Note: Be sure to log into Copilot with your StarID.

Data on Usage

Research over the past year found that the majority of college students are using AI in their schoolwork, with many using it weekly or daily. Most students use AI as a search engine, to check grammar, and to summarize documents. Faculty are also using AI to support their work, although most use it minimally (many report expecting to increase their usage in the future). Faculty tend to use AI for help with curriculum design and automating administrative duties. Both faculty and students share concerns about academic integrity, the potential for misuse of AI, and, ultimately, student learning.

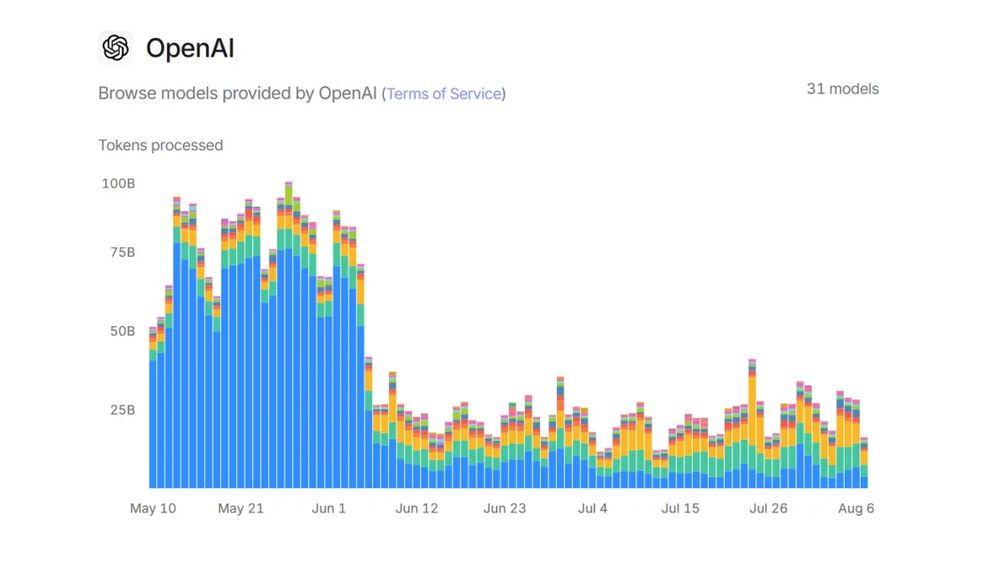

Students and faculty account for a notable share of all AI use. OpenAI shared that chatbot usage dropped significantly when schools let out for the summer. The accompanying image shows the number of tokens processed (a measure of usage) dropping at the end of May. Similar (but smaller) drops can be seen during weekends and school breaks.

Academic Integrity & Misuse

Plagiarism

AI use has changed the way students approach learning, writing, and research. While AI tools can be useful resources for brainstorming, drafting, or revising, they also raise serious questions about academic integrity. When does AI use become AI misuse? The line between acceptable and unacceptable use is blurry. Research finds that students generally agree that submitting work generated by AI is inappropriate. However, for smaller tasks (like checking grammar), students agree that using AI is acceptable.

When in doubt, students should refer to the class syllabus or campus integrity policy or ask their instructor.

Attribution

When using AI in your work, it is important to cite AI-generated content and acknowledge that it is not your own.

APA (7th edition)

Reference List

Microsoft Copilot. (2025, October 31). Response to query about how to cite AI-generated content [Generative AI chat]. Microsoft. https://copilot.microsoft.com

In-text Citation

(Microsoft Copilot, 2025)

MLA (9th edition)

Works Cited

"Response to query about how to cite AI-generated content." Microsoft Copilot, 31 Oct. 2025, https://copilot.microsoft.com

In-text Citation

("Response to query")

Chicago (17th edition)

Footnote

Microsoft Copilot, Response to query about how to cite AI-generated content, October 31, 2025, https://copilot.microsoft.com

Turnitin

Winona State University has a license with Turnitin, a plagiarism detection tool. This tool compares submitted work against a large database of other student papers, websites, and academic publications. It creates a "similarity report" (0-100%) showing how much of the submission matches those sources, which can signal possible plagiarism. Turnitin also estimates the likelihood that the submission was generated by AI. Faculty may activate this tool within D2L Brightspace assignments.

Misinformation & Inaccuracy

Hallucinations

AI hallucinations refer to the phenomenon where large language models (LLMs), such as ChatGPT or Gemini, generate confident yet factually incorrect or entirely fabricated responses—ranging from fake citations to invented historical events. These hallucinations stem from how models are trained: they predict the next most probable token without understanding truth, meaning that even with perfect data, some level of hallucination is mathematically inevitable. Recent studies stress that while models may seem fluent, their outputs can mislead—especially when addressing niche, long-tail information not well represented in their training sets.

There are plenty of recent and public examples of AI hallucinations, including lawyers who used AI to prepare for a case and were given non-existent legal precedents, and a researcher who published a paper with more than 20 fake citations. But most hallucinations are simple factual errors, such as claiming that Toronto is the capital of Canada or that humans have never landed on the moon. These errors can have serious implications, from getting a quiz question wrong to facing legal action.
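To see why a fluent answer can still be wrong, here is a minimal sketch of the "predict the most probable next token" idea described above. It is a toy illustration, not how any real model is built: the lookup table and its probabilities are invented for this example, and the function name is hypothetical.

```python
# Toy illustration only: these probabilities are invented for this example
# and do not come from any real language model.
NEXT_TOKEN_PROBS = {
    "The capital of Canada is": {
        "Toronto": 0.55,   # appears often near "Canada" in this made-up data
        "Ottawa": 0.40,    # the correct answer, but less probable here
        "Montreal": 0.05,
    },
}


def predict_next_token(prompt: str) -> str:
    """Return the single most probable next token for a known prompt."""
    candidates = NEXT_TOKEN_PROBS[prompt]
    # The choice depends only on probability; nothing checks whether it is true.
    return max(candidates, key=candidates.get)


prompt = "The capital of Canada is"
print(prompt, predict_next_token(prompt))
```

In this sketch the tool answers "Toronto" simply because that word scores highest in the made-up table. This is why checking answers against a second source matters.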

How can you reduce the likelihood of AI hallucinations?

- Use common sense and your own critical thinking. Double-check sources and confirm data and information with a second source.

- Ask your AI tool to include citations in responses.

- Use clear and specific prompts.

Outdated & Biased Information

AI tools are often trained on the whole of the Internet, from reliable and trustworthy sources to your neighbor's blog about politics. Because the Internet is full of old, bad, and biased information, so too might be your AI results. AI systems may also exhibit “algorithmic exclusion,” failing to represent groups that are underrepresented or absent in the underlying datasets, which perpetuates inequities not merely by bias but by erasure. These limitations highlight why it’s critical to treat AI outputs with caution, cross-check them with current and unbiased sources, and prefer tools that integrate up-to-date data or allow users to manually refresh their knowledge base.

Examples

- In hiring, Amazon’s now-discontinued AI recruitment tool notoriously favored male candidates over female ones, simply because it learned from a decade of biased résumé data—explicitly encoding historical inequities into its algorithm.

- In 2015, Google’s AI-powered photo categorization tool mislabeled images of Black individuals as gorillas.

Social Impacts

Environmental Impacts

AI tools require huge physical infrastructure, including buildings and the land they are built on, as well as the electronics used, the water used to cool the systems, and the e-waste created. Additionally, training and running large AI models require significant energy resources, which contribute to carbon emissions.

Each ChatGPT query emits an estimated 4.32 grams of carbon dioxide (CO2). While this is not much individually, it adds up to substantial emissions over a day or a year. Forbes notes that training an early version of ChatGPT generated the same CO2 emissions as 120 homes do in a full year. CO2 is a greenhouse gas that traps heat in the atmosphere and contributes to the warming of the Earth.
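To get a feel for how small per-query emissions add up, the arithmetic can be sketched as follows. The 4.32 gram figure is the one quoted above; the daily query counts and the function name are assumptions chosen only for illustration, not measured usage.

```python
GRAMS_CO2_PER_QUERY = 4.32  # per-query estimate quoted in this article


def annual_emissions_kg(queries_per_day: float, days: int = 365) -> float:
    """Convert a daily query count into kilograms of CO2 per year."""
    grams = queries_per_day * days * GRAMS_CO2_PER_QUERY
    return grams / 1000  # 1,000 grams per kilogram


# Hypothetical usage levels, chosen only for illustration.
for queries_per_day in (5, 10, 50):
    kg = annual_emissions_kg(queries_per_day)
    print(f"{queries_per_day} queries/day is roughly {kg:.1f} kg of CO2 per year")
```

Under these assumptions, one person making 10 queries a day would account for about 15.8 kg of CO2 in a year. Multiplied across millions of users, the total becomes significant.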

AI hardware requires rare earth elements and other critical minerals, including neodymium, gallium, and lithium. Many of these materials are mined or refined primarily in China and must then be transported to the US. The mining process is environmentally damaging.

Economic & Labor Impacts

As AI evolves and grows, it may have disruptive impacts on the labor market and economy. Some routine or repetitive tasks (such as data entry or line work) may become automated, reducing the human labor force. Some tasks may instead be supplemented with AI, like teachers being able to create personalized lesson plans for students. And other jobs may be created for AI, such as in development and training.

AI use may increase productivity in some fields, like manufacturing and finance, by automating tasks and optimizing processes. This efficiency may lead to lower prices for consumers. However, it may also result in fewer jobs, especially in lower-wage sectors. Workers may consider re-skilling to be more marketable in these new fields.

Privacy & Data Security

As AI tools become more common in teaching, learning, and research, understanding their privacy and data‑security implications is essential. Data security is a serious issue: Stanford’s 2025 AI Index Report found a 56% increase in AI‑related security incidents in a single year, including data breaches and algorithmic failures that exposed sensitive information. These trends highlight the importance of being intentional about what data we share with AI systems and how those systems store, process, and retain it.

Our license with Copilot provides an extra layer of security; however, you should follow some basic guidelines:

- Avoid sharing personal and sensitive information about yourself, and never share this information about someone else without their permission.

- Use institutionally approved tools.

- Be cautious with uploads that may contain metadata.

- Double check the information provided by AI tools.

- Follow institutional policies.
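As an illustration of the metadata point above, a Word (.docx) file is a zip archive that usually stores details such as the author's name in a part called docProps/core.xml. The sketch below, which uses only Python's standard library, shows one way to look at those details before uploading a file. The function name and the sample document are made up for this example, and other file types (PDFs, photos) store metadata differently.

```python
import io
import zipfile
import xml.etree.ElementTree as ET


def read_docx_core_properties(source) -> dict:
    """Return the core properties (author, dates, ...) stored in a .docx file.

    `source` may be a file path or a binary file-like object.
    """
    with zipfile.ZipFile(source) as archive:
        if "docProps/core.xml" not in archive.namelist():
            return {}
        root = ET.fromstring(archive.read("docProps/core.xml"))
    # Drop the XML namespace from each tag, e.g. "{...}creator" -> "creator".
    return {child.tag.split("}")[-1]: (child.text or "") for child in root}


# Build a tiny stand-in for a .docx in memory so the example is self-contained.
# The author name is invented for illustration.
sample = io.BytesIO()
with zipfile.ZipFile(sample, "w") as archive:
    archive.writestr(
        "docProps/core.xml",
        '<cp:coreProperties '
        'xmlns:cp="http://schemas.openxmlformats.org/package/2006/metadata/core-properties" '
        'xmlns:dc="http://purl.org/dc/elements/1.1/">'
        "<dc:creator>Jane Doe</dc:creator>"
        "</cp:coreProperties>",
    )
sample.seek(0)

print(read_docx_core_properties(sample))
```

With a real document, you would pass the file's path instead of the in-memory sample. If the result includes names or other details you would rather not share, remove them before uploading.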

Copyright & Intellectual Property

Ownership

AI tools raise important copyright questions for anyone creating, teaching, or conducting research at a university. Recent federal guidance continues to affirm that AI‑generated content generally cannot be copyrighted unless a human makes meaningful creative contributions. The U.S. Copyright Office’s 2025 Copyrightability Report reiterates that human authorship is required for copyright protection, meaning that many AI‑only outputs are not eligible for protection under U.S. law.

At the same time, courts are actively shaping the rules around how AI systems may use copyrighted works for training. Several 2025 decisions—including Bartz v. Anthropic and Kadrey v. Meta—began defining the boundaries of fair use in AI training, showing that U.S. courts are not yet aligned on whether training on copyrighted material is permissible.

Practical Examples for Students and Faculty

- Using AI to draft a research summary. If a student asks an AI tool to “summarize this journal article” and uploads the full PDF, they may unintentionally violate the article’s license terms, especially if the AI system stores or reuses the content. Many publishers prohibit uploading full texts to external tools.

- AI‑generated images for a class project. A faculty member who uses an AI‑generated image in a presentation cannot claim copyright over the image unless they add substantial creative input. The Copyright Office has made clear that AI‑only images lack human authorship.

- Training a custom model on course materials. A department experimenting with AI might consider training a model on lecture slides or student submissions. Because these materials are copyrighted, the department must ensure it has the rights to use them for training, especially in light of ongoing litigation about whether such training qualifies as fair use.

- AI output that resembles copyrighted text. If an AI tool produces text that closely mirrors a copyrighted source (such as a paragraph from a textbook), using that output in a paper or publication could constitute infringement, even if the user did not intentionally copy the original.

Accessibility & Equity Considerations

Unequal Access

Access to AI tools varies widely across socioeconomic and geographic lines. Many advanced or "pro" platforms require paid subscriptions ($20/month or more), which can be prohibitive for students from low-income backgrounds or institutions with limited budgets. A 2025 HEPI survey found that while most undergraduate students reported using AI tools, only a third had tools provided by their institution, leaving most students reliant on free versions with restricted features and slower performance. Globally, cost barriers are even more pronounced: in regions where monthly income is under $200, paying for AI subscriptions is unrealistic. These disparities are compounded by hardware and connectivity gaps. Students without reliable laptops or broadband face additional obstacles to accessing AI tools, creating a two-tier system where wealthier students and well-funded schools enjoy advanced capabilities while others are left behind.

Learning Gaps

Unequal access to AI tools translates directly into learning gaps. Students with premium AI access can leverage advanced features for writing support, coding help, and personalized study plans, while peers limited to basic or no AI tools miss out on these advantages. This gap is especially visible in STEM fields and research-heavy courses, where AI can accelerate problem-solving and data analysis. Furthermore, underrepresented groups—such as students from rural areas or low-income schools—are less likely to receive training on responsible AI use, leaving them at a disadvantage compared to peers in well-resourced institutions. These inequities risk widening achievement gaps and reinforcing systemic barriers, making it critical for colleges to provide institutional access, training, and inclusive policies to ensure AI benefits all learners equally.

Institutional Policies

MinnState Guidelines

The Minnesota State System published its guidelines on AI use in 2024.

Additional Resources

Learning More About AI